By John Gruber

WorkOS launches auth.md — an open protocol for agent registration.

- Apple OS 27: The Small Things ★

Rishi Ó:

My favorite Apple updates are not the flashy new features, but the quiet little touches: annoyances fixed, workflows made smoother, rough edges sanded down, and longstanding flaws thoughtfully reworked. To me, they’re the clearest sign of a company that cares about its craft.

Here’s a collection from a WWDC26 screen-grab, organized for easier reading, on improvements coming later this year.

That’s a lot of bullet points.

- The Talk Show Live From WWDC: Tonight, In-Person and Streaming ★

If you can make it in person, you should come. The California Theatre is a beautiful big theater, and tickets are still available.

You can also watch tonight’s show in live stereoscopic immersive video in the Theater app from Sandwich Vision on Vision Pro. A ticket to the live show, purchased in the Theater app for $12.99, is also good for replay forever, with surprise bonus features included. It’s a fun, truly immersive way to experience the show.

Hope to see you there tonight, one way or the other.

- Apple WWDC 2026 Keynote ★

A brisk 76 minutes, including the post-credits Easter egg music video. The past few years ran about a half hour longer.

- Apple’s WWDC AI Demos Were Real and in Real Time ★

Julie Bort, TechCrunch:

But the most telling detail wasn’t what Apple announced. It was how it chose to show some things off. Many of the Apple Intelligence demos featured someone standing, phone in hand, pressing buttons or using voice commands in real time, while another camera showed off the phone’s response.

These weren’t live onstage, anything-could-go-wrong demos; they were pre-taped. But they looked far more like proof of working features than what Apple showed at WWDC 2024, when the company unveiled Apple Intelligence and a new Siri to the world through slickly produced videos that turned out to be more promise than product.

The demos were all shot in single takes, with no editing. In fact, I think most of them were single takes of multiple demos back-to-back. That’s the way it should be, even when they feel a little slow. When a demo feels slow, the solution isn’t to edit the video — it’s to make the feature work faster.

- Apple Introduces Siri AI ★

Apple Newsroom yesterday:

This new version of Siri is built on Apple Intelligence, allowing Siri to draw on personal context understanding and help users find what they need in the moment across messages, emails, photos, and more. For example, users can ask Siri to find a restaurant recommendation a friend messaged them about, surface a hotel confirmation number from an old email, or pull up photos with friends and family from a recent trip. And personal context understanding extends to third-party apps when developers integrate with Spotlight.

With even more systemwide app actions, Siri AI lets users get things done across apps, like drafting an email from scratch, or editing and sharing a set of photos. Using onscreen awareness, Siri AI can answer questions related to the content on a user’s screen. For example, if a user gets a text about a potluck with friends, they can brainstorm with Siri on what to bring and then add a recipe to the Notes app.

In addition, Siri AI can use broad world knowledge to get up-to-date information from the web on virtually any topic and generate a helpful answer, such as when and where to see the next solar eclipse, or when a musician is coming to town. Users can extend almost any response from Siri into a rich conversation and ask follow-up questions.

I like the name “Siri AI”. “New Siri” wouldn’t have legs because eventually this won’t be new. This should be the dividing line between Siri as we know it and Siri as it should be. The demos I’ve seen so far (I still don’t have access on my iOS 27 testing device) are impressive. Well, impressive compared to old Siri. They’re table stakes for generative AI. But Siri AI is the only system that can draw upon your personal data in the apps on your devices, and perform actions based on the app intents supported by the apps on your devices. It is in some ways less capable than ChatGPT or Claude, but in other ways has more potential. It’s a very different approach and I think it’s the right one for Apple.

They need to execute, they need to prove this can scale, and most of all, they need to get third-party apps on board with App Intents and App Schemas. But it seems like they’re doing all of that. This is not a done deal but it is very realistic.

- Apple’s WWDC Announcement of the New Apple Intelligence System ★

Apple Newsroom:

These new capabilities are powered by the next generation of Apple Foundation Models, custom-built in collaboration with Google and its Gemini models for deeply integrated Apple Intelligence experiences. These latest models run on device and on servers using Private Cloud Compute.

Every facet of the new Apple Intelligence architecture is built privacy-first, from the latest Apple Foundation Models to the core operating system technologies that integrate these models deep into Apple’s platforms. Apple Intelligence uses on-device processing and Private Cloud Compute to help protect users’ privacy. Private Cloud Compute gives users access to frontier-level intelligence, while extending the privacy and security of iPhone into the cloud.

What’s confusing about this Apple-Google partnership is that Google pretty much calls all things AI “Gemini”. The models are “Gemini”, the assistant is “Gemini”, and the feature integrations are “Gemini”. So Apple is taking pains to emphasize that they’re building atop the Gemini models, not the Gemini assistant.

One way to think about it is this. Let’s say you’re a Google Gemini app user. That’s the assistant. Now you start using the new Apple Intelligence (that builds atop the Gemini models) and the new Siri AI (that builds atop the new Apple Intelligence). When you go back to the Google Gemini app, nothing you did using Apple Intelligence and Siri AI is visible to the Gemini app. And nothing you continue to do in the Google Gemini app is visible to Apple Intelligence or Siri AI.

Monday, 8 June 2026

- From the Annals of People Having Knowledge of the Matter, Siri AI Extensions Edition ★

Mark Gurman, reporting (?) for Bloomberg two short months ago:

Apple Inc. plans to open Siri to outside artificial intelligence assistants, a major move aimed at bolstering the iPhone as an AI platform. The company is preparing to make the change as part of a Siri overhaul in its upcoming iOS 27 operating system update, according to people with knowledge of the matter. The assistant can already tap into ChatGPT through a partnership with OpenAI, but Apple will now allow competing services to do the same.

The company is developing new tools to allow AI chatbot apps installed via the App Store to integrate with the Siri assistant, said the people, who asked not to be identified because the plans haven’t been announced. The chatbots will also work with an upcoming Siri app and other features in the Apple Intelligence platform.

That means, for instance, if users have Alphabet Inc.’s Google Gemini or Anthropic PBC’s Claude installed, they’d be able to send queries to those services from within the Siri voice assistant, just like they have been able to with ChatGPT since Apple Intelligence launched in 2024.

Maybe Apple ran out of time today, and will announce this tomorrow? Maybe they forgot to announce it? Maybe they scrapped the next-generation Siri that existed two months ago and in the last month rebuilt another entirely new next-generation Siri? I’ll bet something like that is what happened.

I mean, people had knowledge of the matter.

Sunday, 7 June 2026

- Mux — Video for Developers ★

My thanks to Mux for sponsoring last week at DF. Mux is what developers reach for when they need to do more with video. Video files are packed with data and context waiting to be unlocked.

Mux Robots are AI workflows that unlock that data inside your video for summarization, caption translation, moderation, and more. Configure once and your workflows run automatically on new uploads.

Mux is video infrastructure trusted by Patreon, Substack, and Synthesia. Start building for free. Use code FIREBALL at signup for an extra $50 credit.

SwiftUI Only Makes It Easy to Develop Bad Apps

Sunday, 7 June 2026

Paulo Andrade, last month, “Using SwiftUI to Build a Mac-Assed App in 2026”:

I recently launched the macOS version of Shopie, an app I first released on the iOS App Store late last year. Shopie helps you keep track of products you’re interested in by letting you create wishlists and notifying you whenever a product’s price, availability, and other details change.

Unlike my other apps, where I typically blend AppKit (or UIKit) with SwiftUI, Shopie is built entirely in SwiftUI. I wanted to keep it that way to maximize code reuse across iOS, iPadOS, and now macOS. This post explores how far SwiftUI can take you on the Mac in 2026, especially if your goal is to build an app that feels truly native to the platform. It’s not meant to be an exhaustive review of SwiftUI on macOS. It’s simply a collection of recipes and issues I ran into while porting Shopie, a fairly small app, and keeping it 100% SwiftUI.

Andrade’s examples are copious. His conclusion is damning:

Apple dropped the ball here. AppKit was ahead of its time and UIKit was a more polished version of AppKit. A serious cross-platform framework that unified the two should have happened long before SwiftUI. Instead, Apple left AppKit to fossilize and then tried to leapfrog the problem.

You can see the result everywhere. SwiftUI is productive, modern, and often delightful, right up until you try to make a really good Mac app. Then suddenly you’re fighting the framework for things the Mac solved 20 years ago.

There’s something really wrong with SwiftUI. Amongst the apps I use, the best example is Apple Journal. Basic things that have worked reliably for decades, things that until now had simply always worked, are dangerously broken. If you’re running MacOS 26 Tahoe, open Journal and make a new dummy entry. Type something like “The quick brown fox.” Then double-click on the word “brown” and delete it. Now invoke Undo.

What you expect is for the word “brown” to reappear. What happens is ... the whole sentence disappears. Gone. Invoke Redo and you only get back to “The quick fox.” The word “brown” is just gone forever. It’s nowhere in the Undo stack. That’s just profoundly fucked up. I’ve never seen anything like this with an AppKit app, ever. (I’ve never seen it with a UIKit app either, but the same thing happens on iOS with Journal. It’s just that you notice it less often there, because you don’t invoke Undo and Redo nearly as often on iOS.)
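What correct behavior requires isn’t exotic. Here’s a toy sketch in Python of an undo stack that records each edit along with the exact text it removed, so that undoing a deletion puts back precisely what was deleted, and Redo replays that same edit. It is not how AppKit, UIKit, or SwiftUI actually implement Undo; the `TextBuffer` and `Edit` names are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Edit:
    """One text edit: the string `removed` at `pos` was replaced by `inserted`."""
    pos: int
    removed: str
    inserted: str


class TextBuffer:
    """A toy text buffer with operation-based undo and redo stacks (hypothetical)."""

    def __init__(self, text: str = "") -> None:
        self.text = text
        self.undo_stack: list[Edit] = []
        self.redo_stack: list[Edit] = []

    def _apply(self, edit: Edit) -> None:
        # Swap `removed` for `inserted` at `pos`, refusing if the buffer
        # no longer matches what the edit expects to find there.
        end = edit.pos + len(edit.removed)
        if self.text[edit.pos:end] != edit.removed:
            raise ValueError("buffer is out of sync with the edit history")
        self.text = self.text[:edit.pos] + edit.inserted + self.text[end:]

    @staticmethod
    def _inverse(edit: Edit) -> Edit:
        # Undoing an edit means putting back exactly what it removed.
        return Edit(edit.pos, edit.inserted, edit.removed)

    def replace(self, start: int, end: int, new: str) -> None:
        edit = Edit(start, self.text[start:end], new)
        self._apply(edit)
        self.undo_stack.append(edit)
        self.redo_stack.clear()  # A fresh edit invalidates the redo history.

    def undo(self) -> None:
        if self.undo_stack:
            edit = self.undo_stack.pop()
            self._apply(self._inverse(edit))
            self.redo_stack.append(edit)

    def redo(self) -> None:
        if self.redo_stack:
            edit = self.redo_stack.pop()
            self._apply(edit)
            self.undo_stack.append(edit)


if __name__ == "__main__":
    buf = TextBuffer("The quick brown fox.")
    start = buf.text.index("brown")
    buf.replace(start, start + len("brown "), "")  # delete the word and its space
    print(buf.text)  # The quick fox.
    buf.undo()
    print(buf.text)  # The quick brown fox.
    buf.redo()
    print(buf.text)  # The quick fox.
```

The point of storing the removed text in each edit is that Undo never has to guess: the deleted word is always sitting right there in the stack.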

I actually use the Journal app and I’ve lost entire sentences of text to this incompetent implementation of Undo. Editing text in Journal is dangerous because SwiftUI is so bad at something as fundamental as text editing. AppKit has had this solved since 1989 or so, nearly a decade before Apple reunified with NeXT. And my example here is just one of many. Andrade documents a whole bunch more in his post. Shopie is a good modern Mac app — you can practically see from reading his post that Andrade’s hands are scarred from dozens of paper cuts.

So while the world is largely focused on Apple’s AI-related announcements at WWDC tomorrow, I’ve got SwiftUI (on all platforms) and Mac-assed Mac development high on my list. Apple’s developer message used to be that it was not just easy to develop apps for their platforms, but that it was easy to develop good idiomatically native apps. You got the correct complex behavior — for things like Undo/Redo — out of the box. That’s still true for AppKit and UIKit, but it’s never been true for SwiftUI, and SwiftUI is now seven years old. That’s too long for any excuses to hold water. ★

- Alberto Romero on Apple’s AI Spending ★

Alberto Romero:

AI is like religion. Either you believe it changes everything, or you don’t believe at all. There is no moderate position; nobody believes in AGI “more or less,” just like nobody is “casually religious.” If God exists, the only coherent response is to reorganize your entire life around that fact, as priests do. If you pray sometimes, then you are just an atheist who’s also fearful. When tech companies spend hundreds of billions on capital expenditures to add sparkly AI features to Office, Gmail, and Instagram, I only see fearful atheists — guys who don’t believe in AI but pretend just in case.

In 2026, the four largest cloud and AI infrastructure providers — Amazon, Google, Meta, Microsoft — committed to spending $670 billion on CapEx. Apple, in contrast, spent $12.7 billion on capex last fiscal year and projects $14 billion for 2026, 2% of what its peers are spending. The conventional reading in Silicon Valley is, naturally, that Apple is losing. Siri has been a punchline for years — an internal executive called the delays ugly and embarrassing — and critics say that Apple has not been the same without Steve Jobs. It is falling behind, they say, and moving way too slowly for AI.

I disagree with this portrayal: Apple is the most powerful tech company in the world right now because it’s acting according to what it believes.

Some of you, I bet, will object to Romero’s notion that no one is “casually religious”. Almost everyone I know is casually religious, you might be thinking. But read the whole piece. What he’s saying is that if you’re “casually religious” those are just words. You’re not living your life according to your professed beliefs (casual or not). And that’s how most of Apple’s peer companies seem to be approaching AI.

I’m not sure he’s right, but he might be, and I think his take is at least closer to right than wrong. Apple is making an enormous bet on AI — but their bet is that they don’t need to spend hundreds of billions per year on AI infrastructure (most of it fattening Nvidia’s bottom line) to reap the benefits. If Apple’s right, we should start seeing it come together tomorrow.

(Arguably we’ve already seen it coming together — demand for Apple’s products and services has gone up, not down, so far in the AI era. Entrenched leaders often grow during the initial stages of extinctive disruptions — BlackBerry’s biggest year for sales (revenue) and investor confidence (market cap) was 2011, four years after the iPhone debuted — but the disruptors are there. There’s not yet a single threat on the market to the iPhone, iPad, Mac, Apple Watch, or AirPods — nor to Apple’s services revenue.)

Saturday, 6 June 2026

- Halide Mark III ★

Ben Sandofsky, writing on the Lux Camera blog:

After decades of shooting digital, I returned to analog photography in 2023. I thought it would be challenging, given the limited selection of film stocks, only to be surprised by how freeing it felt. It felt so much better to have a handful of amazing choices rather than a photo editor with thousands of presets. We owe that to film engineers who spent years developing versatile film stocks that work in a variety of situations.

Inspired by “Less, but better,” we partnered with the renowned Hollywood colorist Cullen Kelly to develop a succinct set of gorgeous, physically accurate processes exclusive to Halide. Each look was engineered with a specific intent. We verified every look thousands of times on real-world reference photos.

Put another way: every look is a banger.

Halide has always been a great — maybe the great — iPhone camera app for shooting RAW, with the intention of developing your images by hand in post. It’s a great camera technically and a great app UI-wise. Mark II introduced Process Zero, which, in their own description, “uses zero AI and zero computational photography to produce beautiful, film-like natural photos”. Process Zero was the first step toward the new built-in “looks” in Halide Mark III. I’ve been shooting with Mark III for a few weeks now, and they are, indeed, all bangers. And I really like that there aren’t that many of them. I wanted more looks than just Process Zero (which remains available, of course), but I feel a bit overwhelmed when faced with a dozen (or worse, dozens) of choices for processing. I feel conflicted enough having to choose between a handful of really good third-party camera apps with which to shoot in the first place — it’s worse when I have to make too many choices within the camera app itself.

What I want is to just point and shoot and be able to instantly share images with the look I want already applied. I’m picky, but I’m also really lazy, and don’t want to do any editing in post on most of the shots I keep. But I do want to be able to edit in post if I want to, including changing the look losslessly. This mixture of point-and-shoot ease and pro-level control didn’t use to be possible. These days, though, it is, with apps like Not Boring Camera, Analogue, and, now, Halide Mark III.

It’s been a turbulent couple of months for Lux (to say the least), so I’m glad to see Sandofsky and team get Mark III out the door. If you, like me, had previously been impressed by Halide but didn’t use it because it required too much work in post, you should check out Mark III.

- 60 Minutes Correspondents Lesley Stahl, Bill Whitaker, and the Other Guy Will Stay at Show ★

Lesley Stahl, Bill Whitaker, and Jon Wertheim, in a memo to the 60 Minutes staff obtained by The New York Times (gift links):

We have had a hard time deciding whether to stay at 60 Minutes. We’re still deeply upset by the firings of Tanya and Draggan, strong leaders who everyone respected. As far as we can tell, because no explanation has ever been offered, they were expelled because they fought for our 60 Minutes values and stood up to protect our independence and integrity.

Newsrooms are not supposed to be run like dictatorships. Collaboration and argument are the way we have always worked at 60. Don Hewitt actually encouraged loud passionate advocacy for our pieces. [...]

We feared that our returning might be construed as an endorsement of the existing power structure. That is simply, categorically not the case.

Here’s why we’re staying:

We don’t want to see 60 Minutes die.

We’ll see how long this lasts.

- Trump Lawyer Argues Trump Can Tear Down Statue of Liberty ★

Josh Marshall:

In a hearing today about the president’s bulldozing of the East Wing of the White House and plans to build a vast ballroom, a judge asked if the president could also bulldoze the Statue of Liberty and be subject to no legal challenge. The DOJ lawyer, Yaakov Roth, said that yes, President Trump could decide tomorrow to bulldoze the Statue of Liberty and no one could stop him.

It was a good question from DC Court of Appeals Judge Patricia Millett since it brings the arguments and their implications clearly into the open. Reframe the question and the absurdity of this proposition becomes even more clear. If you hire someone to administer your estate, can they burn down the buildings on your estate or chop it up into parcels and sell it off? Presumably not. You hired them to run it, not to destroy it or sell it. It’s not theirs. They were hired for a specific task. That person is your employee. The president is hired to administer the country and enforce its laws for four years. He doesn’t own the country or its properties.

Pathetic lickspittles, one and all.

Friday, 5 June 2026

- Nieman Journalism Lab: Twitter/X Punishes Accounts That Post Links ★

Laura Hazard Owen, writing for Nieman Journalism Lab back in April:

I used Claude to help me scrape the 200 most recent tweets from 18 large publishers’ X accounts and track the engagement (likes + comments + retweets) on each. Six of those publishers have paywalls: Bloomberg, CNN, Forbes, The New York Times, The Wall Street Journal, and The Washington Post. Nine don’t: Al Jazeera English, AP, BBC, Breitbart News, CBS News, Daily Wire, Fox News, NBC News, and Reuters. The last three accounts I looked at — Leading Report, unusual_whales, and Globe Eye News — are not news publishers, but aggregate breaking news in tweets without links. (Here is an example of a Leading Report tweet: “BREAKING: Iran has halted direct talks with the US, per WSJ.”) They’re sometimes referred to as engagement-maxing accounts.

These charts make it pretty clear that links in tweets hurt engagement. The connection was so apparent in my analysis that a graph including all 18 publishers is almost unreadable: The traditional, link-loving publishers are clustered in the bottom left corner (lots of links, little engagement) in a nearly indistinguishable mass of bubbles, no matter how large their followings are.
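Owen’s metric is simple arithmetic: a tweet’s engagement is its likes plus comments plus retweets. Here’s a minimal Python sketch of one plausible way to reduce an account’s scraped tweets to a single point on a chart like hers: the share of its tweets that contain links, and its average engagement per tweet. The `Tweet` fields and the use of a plain mean are assumptions for illustration; the article doesn’t say exactly how its charts aggregated the numbers.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Tweet:
    # Hypothetical fields; the article doesn't describe its data format.
    likes: int
    comments: int
    retweets: int
    has_link: bool

    @property
    def engagement(self) -> int:
        # Owen's definition: likes + comments + retweets.
        return self.likes + self.comments + self.retweets


def link_share_and_mean_engagement(tweets: list[Tweet]) -> tuple[float, float]:
    """Return (fraction of tweets with links, mean engagement per tweet) for one account."""
    if not tweets:
        raise ValueError("need at least one tweet")
    link_share = sum(t.has_link for t in tweets) / len(tweets)
    return link_share, mean(t.engagement for t in tweets)
```

Run that once per account and each publisher becomes one bubble; link-heavy accounts with low engagement end up bunched together, which is the pattern Owen describes.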

Musk’s Twitter/X is not an aggregator for news. It’s a walled garden. But the type of garden where you need to keep your eyes open and your hand on your wallet. Sometimes it’s fun to visit a seedy neighborhood. But let’s not pretend it isn’t a seedy neighborhood just because, long ago, it used to be nice.

- Elon Musk’s X Is a Freak Show ★

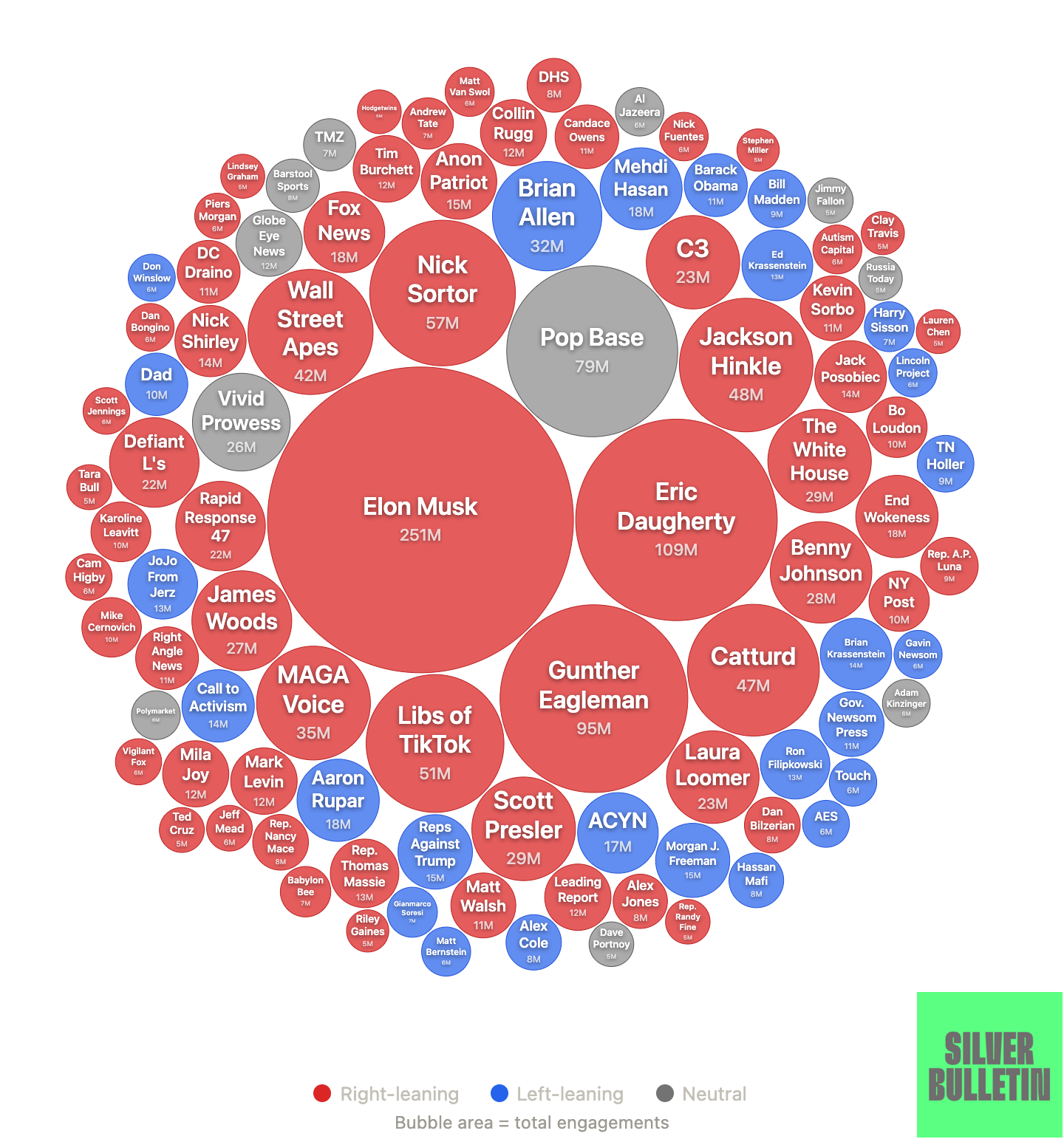

Nate Silver, back in April, under the headline “Social Media Is Turning Into a Freak Show”, where by “social media” he mostly discusses Twitter/X:

But what does that remaining traffic consist of? I recently came across a bubble chart depicting the Twitter accounts that had received the most “engagement” in February 2026. It was depressing: most of the top accounts were extremely low-quality and highly partisan. I hadn’t even heard of many of them and only follow a handful of the top accounts. So I tracked down the original data myself and, with help from Claude, made my own improved version of the chart. Here, voilà, are the Twitter accounts with the most engagement so far in 2026:

It’s not hard to notice that Twitter has become extremely right-leaning. But I’d argue there’s an equally important trend: the top accounts are of incredibly low quality. Elon, with the algorithmic boost he built in for himself, is at the eye of the storm, of course. But “Catturd” literally gets far more engagement than the New York Times, for instance.

There’s a common argument from proponents of the Musk-era X that the only problem is that left-leaning people have abandoned the platform. That the X algorithm is a contest and if only right-leaning accounts are playing, of course they’re winning. This is nonsense. The whole thing is rigged. Elon Musk’s outsized prominence as the most-engaged-with account is proof of that. Twitter existed for 16 years before Musk bought it. He wasn’t even close to the biggest account during that era. Then he bought it. Now his account is the biggest.

As Silver’s data analysis shows, Musk’s X is not just dominated by right-wing accounts, it’s dominated by “who the hell is that?” right-wing slop accounts.

The only way not to lose a rigged game is to refuse to play. X is still a thing. A lot of people, companies, and organizations still post there exclusively, treating it like their blog. I still wind up linking to posts on X because that’s where they are. That’s a whole separate discussion. But anyone who’s trying to “compete” there with subject matter that is even vaguely political has no chance of success unless what they’re posting is what Elon Musk wants to see promoted. It’s not like his thumb is on the scale; it’s like an anvil is on the scale. The conundrum is that there are still a lot of interesting people posting interesting things there.

- Checking in on Perplexity ★

Yours truly, last August:

I can’t see why Apple would want to get involved with a company like this though. Gurman’s report makes it sound like his sources are inside Apple, but man, this “Apple + Perplexity” thing feels more like something Perplexity would be seeding than one that Apple executives would be leaking.

Perplexity is still occasionally in the news (often not in good ways), but it seems to me they’ve slipped into the “afterthought” tier of AI startups — which is exactly why they started leaning into clownish stunts last year. Everyone who previously suggested Apple should — or even might — buy them has gone silent.

Thursday, 4 June 2026

- Some People Rooted for The Empire in ‘Star Wars’, Too ★

Ed Morrissey, writing for Hot Air, thinks Scott Pelley got what he deserved and Bari Weiss is doing a good job running CBS News:

And Pelley forgot the Golden Rule: He who has the gold makes the rules. Instead, Pelley convinced himself of his own virtue and torched his own position — and if Bilton’s letter is accurate, in as mean-spirited and conceited a manner as possible. Pelley could have chosen a dignified resignation under protest, but instead pulled a power move in an attempt to intimidate Bilton, Weiss, and Ellison, only to discover that no one feared his absence. In fact, they’re probably happy to cut him loose.

There’s always at least one person in these situations who thinks they’re untouchable. A wise executive knows to start by making an example of that person, and then see how many other people think they’re indispensable. It’s not as if TV news jobs are expanding these days, after all. Pelley’s going to find out the hard way that no one’s paying $5 million a year to emote into a camera from other people’s copy.

It doesn’t even enter this man’s little mind that Pelley wasn’t concerned about his job, wasn’t concerned about his salary, but was concerned only with the integrity of the institution to which he’d committed decades of his career, and that he saw as his duty the need to stand up for his remaining and former colleagues. That Pelley himself has integrity. To the Trump lickspittles, everything is performative. They don’t just lack integrity, they don’t believe integrity is real.

The Scott Pelley story, to me, is a lesson: if you work hard enough in your career to get Fuck You Money, the real reward is that on the day you need to say it, you can.

- The Talk Show Live From WWDC 2026: Tuesday in San Jose ★

Location: The California Theatre, San Jose

Showtime: Tuesday, 9 June 2026, 7pm PT (Doors open 6pm)

Special Guest(s): For sure

Price: $45

The annual live-audience episode of The Talk Show during the week of WWDC. If you can make it, you should come. You’ll even enjoy the prelude, mingling with fellow DF readers and listeners.

- ‘The Insider’ ★

All this Sturm und Drang surrounding 60 Minutes has me thinking about a re-watch of The Insider, Michael Mann’s great 1999 movie. Letterboxd’s synopsis: “A research chemist comes under personal and professional attack when he decides to appear in a 60 Minutes exposé on Big Tobacco.” It’s a great movie, and feels apt AF at the moment. Here’s the original segment on 60 Minutes, which ran an entire half hour.

What’s going on today is like if — instead of getting shady, threatening, and litigious — the tobacco companies had just purchased CBS, purged the staff at 60 Minutes, and hired a bunch of pro-cigarette stooges to replace them.

- ‘Microsoft and OpenAI Broke Up — Now They’re Ready to Fight’ ★

Hayden Field and Tom Warren, writing for The Verge (gift link):

This year’s Build had the vibe of a freshly single divorcée posting a thirst trap on Instagram. “It’s always fun to be at developer conferences in times of great change,” Microsoft CEO Satya Nadella said onstage Tuesday, adding that events like this are about “coming to grips with the new opportunity.”

AI chief Mustafa Suleyman, in an interview with The Verge, put it even more bluntly.

“The goal is to prove that we can become one of the top four labs in the world,” Suleyman said. “There’s three labs that matter, Google DeepMind, OpenAI, and Anthropic. We are not one of them at the moment, and that’s always been my intention. It’s why I came here. I want to build the very best frontier models in the world, fully multimodal, and in order to do that, we have to prove that we can do everything that we need to from the ground up, and we’re not just going to take from others.”

Refreshingly blunt.

But hasn’t that been Microsoft’s plan for Bing since it was announced in 2009? I mean I guess you can say that Bing is one of the top four search engines in the world. Maybe you can even say it’s one of the top two. But it’s irrelevant and uncompetitive with Google Search.

- Lingon and Lingon Pro 10 ★

Peter Borg:

Lingon makes scheduling apps, scripts, shortcuts, and commands feel simple. Create a task in minutes, run it on a schedule, and stay in control.

Lingon helps you run whatever you want whenever you want without living in Terminal. Schedule apps, scripts, shortcuts, and commands with a clear, friendly UI.

Run tasks at specific times, on intervals or at login. Optional notifications make it easy to keep control.

Two separate apps. Lingon is the simpler Mac App Store version and free to use, while Lingon Pro is the advanced one-time purchase with extra power.

Lingon and Lingon Pro are great apps. I’ve been meaning to recommend them for a while.

Back in 2023 I wrote about a problem I was having with Maestral, the incredible “works like Dropbox in the old days” open-source Dropbox client, where Maestral would just silently crash once in a while and I wouldn’t notice for some time. Then I would notice, manually re-launch Maestral, and have to wait while Maestral synced. Or, worse, I’d put a podcast recording in a shared folder and walk away from my computer, and my editor would never get the file because Maestral wasn’t running. My write-up described how I solved the problem with a Keyboard Maestro macro that runs once an hour: it checks if Maestral is running, and if it isn’t, launches it (and writes to a log, to satisfy my own curiosity). Borg wrote to me after I posted that and, very politely, explained that Lingon would make that much simpler.
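The logic of that hourly check is simple enough to sketch. Here’s a hypothetical Python version (not the actual Keyboard Maestro macro) that assumes the app’s process name is `Maestral`, uses `pgrep -x` to see whether it’s running, and uses the macOS `open -a` command to relaunch it. Scheduling is left to whatever runs it hourly: Keyboard Maestro, Lingon, or a launchd agent with a `StartInterval` of 3600 seconds.

```python
import logging
import subprocess
from typing import Callable

APP_NAME = "Maestral"  # Assumption: the process name matches the app name.


def is_running(name: str) -> bool:
    # `pgrep -x` exits with status 0 when a process with exactly this name exists.
    result = subprocess.run(["pgrep", "-x", name], capture_output=True)
    return result.returncode == 0


def launch(name: str) -> None:
    # On macOS, `open -a` launches an application by name.
    subprocess.run(["open", "-a", name], check=True)


def ensure_running(
    name: str = APP_NAME,
    running: Callable[[str], bool] = is_running,
    start: Callable[[str], None] = launch,
) -> bool:
    """Relaunch `name` if it isn't running. Returns True if a relaunch happened."""
    if running(name):
        return False
    logging.info("%s was not running; relaunching", name)
    start(name)
    return True
```

Calling `ensure_running()` from the scheduler is the whole job; add a `logging.basicConfig(filename=...)` call first if you want the log. The check and launch functions are passed in as parameters so the decision logic can be tested without touching real processes.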

In addition to creating your own scripts and rules that run periodically, Lingon is great for inspecting all the login items and background agents on your system — whether they’re from Apple or third parties. Poking around at everything Google Gemini installed is what made me think to recommend Lingon today. At the very least you should install the free regular version. It’s just a great Mac utility from a great Mac developer. There’s nothing else like it.

- Remember When Chrome Went Bad on MacOS? ★

Loren Brichter, back in 2020:

Short story: Google Chrome installs an updater called Keystone on your computer, which is bizarrely correlated to massive unexplained CPU usage in WindowServer (a system process)[1], and made my whole computer slow even when Chrome wasn’t running. Deleting Chrome and Keystone made my computer way, way faster, all the time.

Long story: I noticed my brand new 16” MacBook Pro started acting sluggishly doing even trivial things like scrolling. Activity Monitor showed nothing from Google using the CPU, but WindowServer was taking ~80%, which is abnormally high (it should use < 10% normally).

Doing all the normal things (quitting apps, logging out other users, restarting, zapping PRAM/SMC, etc) did nothing, then I remembered I had installed Chrome a while back to test a website.

I deleted Chrome, and noticed Keystone while deleting some of Chrome’s other preferences and caches. I deleted everything from Google I could find, restarted the computer, and it was like night-and-day. Everything was instantly and noticeably faster, and WindowServer CPU was well under 10% again.

Not all Mac users, but many, found that just having Chrome installed slowed down their Macs dramatically. Completely uninstalling Chrome — and its pernicious background agents — solved the problem. This years-old “Chrome Is Bad” saga came to mind when I wrote about Google’s Gemini Mac app’s background agents.

It seems as though Google eventually fixed these Chrome bugs — or Apple changed something in a MacOS update that fixed the bugs for them — but I’ve never seen a full explanation of the problem and eventual solution. Does anyone know what happened here?

The main point is it never should have happened in the first place. A third-party app should just be a third-party app — not add components to your system software just so it can update itself when it isn’t running. Background agents and extensions are sometimes necessary to the functionality of a product. Checking for software updates to a browser or AI chatbot, when those apps aren’t running, is not necessary. The golden rule applies: imagine if every app on your system installed its own background agent to check for software updates. Chrome is a popular browser on the Mac, but it’s just a web browser. Other web browsers do just fine checking for updates from the browser itself when they’re running. If the user is actually using an app regularly, it’ll get plenty of chances to check for updates when it’s running. If the user isn’t regularly using an app, why in the world should that seldom-used app have software running all the time in the background?

This sort of chaos is why Apple keeps iOS locked down. There are no third-party login items on iOS that run in the background — let alone ones with no option to disable. No third-party app can do anything that causes the iOS window manager to consume 80 percent of the CPU while ostensibly idle. There are obviously trade-offs here. I rely on a Mac for my workstation because the Mac gives me the power to potentially shoot myself in the foot. But one major reason why iOS is an order of magnitude more popular than MacOS is that you cannot shoot yourself in the foot with it, even though that means you can’t use it to do things that would require that power.

- Google’s Gemini Mac App Is Native, in a Distinctly Google Way, But Annoyingly Presumptuous ★

Two months ago Google launched a new native Mac app for Gemini. I’ve been trying it, on and off, since. It’s ... not bad. Certainly better than Claude’s Electron shitbox. But the Gemini app isn’t all that good, either. I’m sticking with ChatGPT, which remains far and away the best native Mac client to an LLM. (And ChatGPT is not that great of a Mac app — it’s just the closest to good of the bunch.)

The thing that really turns me off about the Gemini Mac app is Google’s gall. The Gemini app installs a background helper named “GeminiAppLauncher” in your login items. It also installs “GoogleUpdater” as a process with the privilege to launch in the background whenever it wants. Gemini never asks for permission to install either of these, and, most arrogantly, if you, as an informed user, remove either of them, the Gemini app silently adds them back. There is no setting in Gemini to disable this. There’s a mindset from some big companies that your system is theirs to play with at the system software level. Fuck that. Michael Tsai’s post on the Gemini Mac app links to this thread on MacRumors regarding this pernicious auto-installed and auto-reinstalled login item. Here’s another on Reddit.

Google’s approach to its Mac software is disrespectful and entitled.

I’d have been happy to keep the Gemini app installed if it just sat in my Applications folder when I wasn’t using it. But it doesn’t, and Google shows no signs of caring, so I just deleted it and uninstalled its background launch agents (in ~/Library/LaunchAgents/). Feels great, like I took a much-needed shower.

(Sidenote: The Gemini Mac app is a native Mac app, but it is ... weird. Gus Mueller poked around at it and found that it’s the product of a Java-to-Objective-C converter that Google made, and much of it was originally written for Android.)

- The AI-Driven Resurgence of Native Mac App Development ★

Jason Snell at Six Colors, looking ahead to WWDC next week:

These days, I’m getting emails pitching me for an endless stream of new Mac apps. It’s quite remarkable because there was a period five or ten years ago when it seemed like all app development on Apple’s platforms was focused on iOS. Even more interesting, these are all indie Mac apps that seem to be built using native Mac frameworks, not the product of big corporations that are just rolling their cross-platform development system out everywhere. These apps seem to have a point of view and are focused on the Mac.

Of course, it’s happening because of AI. [...]

Mac users — some of them developers, some of them people who have never written software in their lives — are building apps that fulfill their imaginations.

We now live in an era where, if you can dream an app, you can probably build it. Especially Mac utilities. And who cares more about native Mac software than Mac users? Certainly not those companies that gave up on Mac development and focused all their energies on giant cross-platform code bases to attract venture investment and big payouts.

There are pros and cons to everything, but on the whole, AI-assisted programming has rejuvenated Mac development. It wasn’t moribund, but it was stagnant. And stagnation is the first step toward decline. Now it’s resurgent, and that’s a fun thing to see. And, I think, genuinely important for the future of the platform. I’ve been concerned for years that the biggest problem the Mac faces is that so many new apps for the platform weren’t Mac apps. The Mac has never faced a decline in popularity, but truly native Mac application development (and the skills) did. Now it’s turning around. Mac users are thirsty for Mac apps, and with AI, they can quench their own thirst and tell the dullards promulgating Electron bundles to pound sand.

(And Snell, it turns out, has joined the party.)

Wednesday, 3 June 2026

- Another Gem From the Annals of Nick Bilton Jackassery ★

I look forward to pseudoscience like this finally getting some airtime on 60 Minutes. For 58 long years the program has been hopelessly biased toward actual science.

- If There’s One Thing Nick Bilton Knows, It’s Television ★

Back in 2011, when he was a tech columnist at The New York Times, Nick Bilton figured out that Apple was soon going to launch an Apple-branded television set, with no remote control. You’d just talk to it. This made no sense, of course, as I pointed out.

Bilton closed his column thus:

The company is now close enough that it could announce the product by late 2012, releasing it to consumers by 2013.

It is coming though. It’s not a matter of if, it’s a matter of when.

Maybe it’ll launch in time for Bilton’s first season at the helm of 60 Minutes this fall, with his all-new lineup of correspondents.

- Scott Pelley on Leaving ‘60 Minutes’: ‘Incompetence and Unprofessionalism in the New Management Have Wreaked Havoc’ ★

Scott Pelley, in a statement posted on Instagram (which I’ll quote in full, as the original is locked behind a dickwall if you’re not signed in to an Instagram account):

There has never been anything in America like 60 Minutes.

The Sunday tradition is the most successful program of any kind in history. For more than a decade, its innovative growth on every major online platform has extended its reach to countless millions around the world. This spring, at the end of our 58th season, 60 Minutes grew rapidly with an unheard-of 9% jump in viewers on CBS.

“60” has been the number-one program in America for decades because our beloved audience finds integrity, quality, and humanity in our stories. When stewardship of the program passed to my colleagues and me, our responsibility was to expand energetically into a new age of media technology while preserving the values our audience expects. Now, the new owner of our network is casting this legend aside, apparently to curry a moment of favor with the Trump administration.

The waste is heartbreaking.

Last month, 60 Minutes lost its DNA when our entire senior leadership and two of our best on-air correspondents were cruelly fired without cause. Good people were silenced because they stood up for our audience. They stood for fairness against the forces of political bias; they stood for professionalism against chaos.

For my part, new management has instructed me to inject falsehoods and bias into a politically sensitive story. I’ve been told to include assertions that are unverified. To date, in every case, I have managed to ignore these instructions or refuse them.

Recently, politicians have been invited to choose correspondents for interviews on the broadcast. Giving politicians control over 60 Minutes interviews is not how honest journalism is done. Finally, incompetence and unprofessionalism in the new management have wreaked havoc. In a case involving one of my stories, the entire program came within 19 minutes of not getting on the air at all.

At 60 Minutes, we have fought harder than anyone knows to save the program that became an American icon. We owed that to our millions of viewers. I am deeply moved by the thousands of wishes we have received to “keep up the good fight.” Most of my colleagues at CBS News are still in that fight. But now the collapse of values at the top has become untenable. The leadership of 60 Minutes is no longer recognizable. The principles I hold dear are gone, and so I must leave as well.

I depart after 37 years at CBS with one emotion — a heart brimming with gratitude for the men and women of CBS News who encouraged and enriched my work, very often at the risk of their own lives. I pray for a day when those people and their ideals are honored again — a day when sanity, competence, and courage return.

- The ‘60 Minutes’ Purge ★

Paramount’s “Press Express” page promoting 60 Minutes still lists all eight correspondents from the 2025–2026 season, the program’s 58th. (Perhaps they fired the person responsible for keeping the cast page up to date.) In the order they appear on Paramount’s listing:

- Lesley Stahl
- Scott Pelley (fired today)
- Bill Whitaker
- Anderson Cooper (left on his own after 20 years)
- Sharyn Alfonsi (fired last week)
- L. Jon Wertheim
- Cecilia Vega (fired last week)
- Norah O’Donnell

A big part of the brand for 60 Minutes is that the show doesn’t change. Someone who last saw it 40 years ago would instantly recognize it today. There’s no silly fucking theme song. There’s no glossy set. There’s a ticking stopwatch, a logotype set in Microgramma/Eurostile, and correspondents sit against a black background. And correspondents measure their tenure not by years but by decades. Of the original hosts, Harry Reasoner was there for 23 years (and left the cast only upon his death at 68 in 1991), Dan Rather was there for 38 years, Mike Wallace for 40, and Morley Safer for 48. 48 years! Of the current hosts, Lesley Stahl has been there since 1991. I graduated high school that year.

In just the six months since David Ellison bought CBS and installed Bari Weiss as editor-in-chief of CBS News, they’ve fired or lost half their on-air talent, and of the four who remain, Wertheim and O’Donnell are only part-time (O’Donnell’s title is “CBS News senior correspondent”, not “60 Minutes correspondent”), Whitaker is 74 years old, and Stahl is 84.

Behind the cameras, longtime executive producer Bill Owens resigned in protest of corporate interference a year ago, in the cowardly run-up to Ellison’s acquisition of CBS. Last week Weiss fired Owens’s successor, Tanya Simon, who had been with the program for 30 years, replacing her with Nick Bilton, who not only had never worked at 60 Minutes, but has never worked in TV news, period. Weiss also fired executive editor Draggan Mihailovich, who’d been at the show for 28 years.

It seems untenable for Stahl or Whitaker to remain on the show. Pelley called it what it was in Bilton’s ham-fisted staff meeting Monday: the murder of the institution.

- CBS News Fires Scott Pelley of ‘60 Minutes’ ★

Benjamin Mullin and Michael M. Grynbaum:

In a formal letter to Mr. Pelley, which was obtained by The New York Times, Mr. Bilton wrote that the correspondent had been “terminated for cause effective immediately.”

The letter is a must-read. No summary of it can capture just how pathetic a man Nick Bilton is. He disputes nothing Pelley said in the Monday staff meeting, and firing Pelley proves that Pelley was exactly right.

Mr. Pelley, in a telephone interview on Tuesday evening shortly after he was fired, said he had devoted decades of his life to “60 Minutes,” which he said he still cared about deeply.

“I have been in combat in Afghanistan,” Mr. Pelley said. “I have been in combat in Iraq. I have been in the war zone in Ukraine multiple times, risking my life and the happiness of my family because of my devotion to the broadcast.” [...]

Earlier on Tuesday, Mr. Pelley sent a statement to The Times that assailed the new leadership of CBS News, writing that “incompetence and unprofessionalism in the new management have wreaked havoc” at the network. He added, “The collapse of values at the top has become untenable.” Mr. Pelley also wrote that senior managers at CBS News had pressured him to insert bias into stories for “60 Minutes” this past season, though he did not provide details about specific segments.

I look forward to hearing those segment-specific details. It’s not hard to guess the direction that bias went.

- The Underworld Market to Remove the Recording Indicator Light on Meta Glasses ★

Joanna Stern, on YouTube:

People across the country are offering a service on Facebook Marketplace to disable the recording light on Ray-Ban Meta glasses. They call it “Stealth Mode.” Joanna paid $100 for the modification and went inside the growing business of turning smart glasses into covert cameras. She investigates who is doing it, whether it’s legal and what some are doing to try and stop it.

Of course there’s a market for this. But the true chef’s kiss is that the market to find people who offer the service is on ... Facebook Marketplace. Using a Meta platform to find people to hack a Meta device so you can surreptitiously record strangers. So perfectly Meta.

Tuesday, 2 June 2026

- Meta Reportedly Has a Slew of New Smart Glasses Planned for This Year ★

James Pero, summarizing for Gizmodo this paywalled report by Jyoti Mann for The Information:

But, wait, there’s more: in addition to the fall releases, The Information reports that Meta also has a pair slated for December, codenamed “Mojito VIP.” There are also two prototypes being tested in the fall, according to the report, including one called “Artemis” and another called “SSG,” which is short for “supersensing glasses.”

The Information previously reported that the “supersensing” pair would have always-on cameras capable of looking at your surroundings without you having to prompt the voice assistant or activate the camera with a button. The idea here is that, with a constant stream of visual information, the smart glasses could be a kind of ambient virtual assistant that remembers where you left your keys or other vision-based reminders.

Spitball: Meta’s entire business is predicated on knowing as much about people as possible. Their interest in building out a virtual “metaverse” world was motivated by the fact they could track everything people do, see, say, and hear there. That didn’t play out, so they’re pivoting to building out devices that will let them track everything people do, see, say, and hear in the real world.

- Apple, the Anti-‘Metaverse’ VR Company ★

One more bit of “metaverse fever dream” follow-up. The one company in the field that Nick Heer doesn’t mention is Apple, makers of the best-known (albeit not best-selling) virtual reality headset. Think and say what you want about the Vision platform (I still think it’s the first inning of a long game), but no one at Apple ever once gave a hint of endorsing “metaverse” hype. In fact, as I’ve noted before, at a 2022 WSJ event, seven months before Vision Pro was announced and over a year before it was released, Joanna Stern asked Greg Joswiak and Craig Federighi:

Stern: You have to finish this sentence, both of you. The metaverse is...

Joz: A word I’ll never use.

“Fever dream” is right.

- The Metaverse Was Snake Oil for Isolation ★

A follow-up point from my post yesterday linking to Nick Heer’s blockbuster “The Metaverse Fever Dream”. In particular, the connection Heer draws between the rise of “metaverse” hype and the pandemic.

I always sort of knew that metaverse hype roughly coincided with the Covid lockdown and our collective period of isolation and loneliness, a year-plus stretch when we relied mostly on computer platforms for nearly all socializing. But here in 2026 it’s now clear that metaverse hype and lockdown-induced isolation coincided precisely. They didn’t roughly overlap; they exactly overlapped. So much so that I’m now wondering if any of the “metaverse” hype would have happened if Covid hadn’t happened. Facebook still likely would’ve renamed itself, because they’d so poisoned the “Facebook” brand itself, but maybe to something other than “Meta”.

We allowed the necessary initial emergency lockdown to extend indefinitely because it seemed like maybe we could get by for a long stretch using technology. The extended lockdown never would have happened if the Covid pandemic had broken out 20 or more years earlier. In 2020 and 2021, we could squint and say, sure, maybe kids can “go to school” via Zoom. We never would have kept all kids home for an entire year pre-Zoom. But the truth is Zoom “school” wasn’t much better than no school at all. Same for Zoom “work collaboration”, and Zoom “friend gatherings”. It was an illusion that today’s technology is even close to a sufficient substitute for being in each other’s physical presence. The siren call of “the metaverse” was exactly what we craved — technology that would be a sufficient substitute for real-world experiences and socializing. The best audience for snake oil is people with actual ailments. And during Covid, we were all ailing socially.

- Scott Pelley Accuses CBS News Boss of ‘Murdering’ ‘60 Minutes’ ★

Michael M. Grynbaum and Benjamin Mullin, reporting for The New York Times (gift link):

CBS News faced a fresh wave of turmoil on Monday after Scott Pelley, the “60 Minutes” correspondent, laced into the show’s newly hired executive producer during a staff meeting and accused Bari Weiss, the network’s editor in chief, of “murdering” the longstanding Sunday news program.

In an extraordinary exchange, Mr. Pelley, his newscaster’s baritone sometimes shaking in anger, told Nick Bilton, the new executive producer, that he had “slender” qualifications for his new job and questioned the network’s commitment to the future of the program, according to a recording of the meeting obtained by The New York Times.

The 10 a.m. gathering, held at the program’s Midtown Manhattan headquarters, was intended as a formal introduction to Mr. Bilton, a tech journalist and filmmaker who was appointed last week as part of a major shake-up at “60 Minutes.” CBS fired Tanya Simon, the previous executive producer, and her deputy, along with Sharyn Alfonsi and Cecilia Vega, two of the show’s correspondents — an event that Mr. Pelley referred to as “Black Thursday.”

It’s worth noting that the night before the firings, 60 Minutes won two news Emmys. It’s even more worth noting that 60 Minutes’s TV ratings were up 9 percent year-over-year, and digital video views doubled. In both quality and popularity, the show is thriving, not struggling. (See also: The Late Show With Stephen Colbert was, by far, the top-rated late night talk show.)

“Broadcast is an ice cube that is melting, OK?” Mr. Bilton said, saying the show had to adapt. “Bari loves this institution,” he added. “She loves ’60 Minutes.’”

At that, Mr. Pelley interrupted.

“She is murdering ‘60 Minutes,’” the correspondent said. “She does not love this place. She was brought in to kill it, and she’s been doing exactly that.”

Mr. Pelley added: “She has no qualifications for her job; you have slender qualifications for this job. The changes that she’s made at the ‘Evening News’ have been catastrophic, so why should we expect that any of this is going to be any better?”

Oof.

Oliver Darcy obtained a recording of the entire Bilton-Pelley exchange, and transcribed much of it, but it’s behind the (worth it!) Status paywall.

- Three Ways to Get Paid ★

Jason Zweig, back in 2018:

My father, who died in 1981, was an inexhaustible font of wisdom and wit. I don’t know when he told me this particular three-part rule, but I’ve never forgotten it. I tweeted it three years ago, but people keep asking for it in one place, so here it is.

There are three ways to make a living:

Lie to people who want to be lied to, and you’ll get rich.

Tell the truth to those who want the truth, and you’ll make a living.

Tell the truth to those who want to be lied to, and you’ll go broke.

The rest is commentary.

Pairs well with Om Malik’s remarkable line about the success of “the grifters and the hucksters and the influencers selling impossible things” in his “We Are Living in Pinocchio’s World” essay that I linked to yesterday.

- The First-Time-Buyer-Discount Dickover Scheme ★

Neil Panchal, on Twitter/X (XCancel link):

Of all the dickovers, the dickover that blueballs you with some first-time buyer incentive. “Sign up and get 10% discount, new accounts only”, the dickover boasts.

Never understood why you’d ever penalize returning customers with a dickover, blue-balling them with 10% off teaser that they’re ineligible for. wtf?

And for first time buyers, they’d always feel left out if they don’t shove their email address in the dickover. The choice is an illusion with a penalty of 10%. But wait… there’s more! You only get a discount code if you, after clicking the confirmation email link, also sign up for their SMS marketing. You just got double dicked.

I fell for this racket once, albeit with my eyes open. Last year I bought a cap from New Era’s website. They offered me some sort of discount for giving them my email address. I knew they were going to get my email anyway because I was going to buy the hat, so I figured why not. Only then — exactly as Panchal describes — did they say I also needed to give them my phone number and grant permission to text me marketing messages. Now I was pissed. I did it anyway, just to see what happened (and get the discount). As soon as I bought the hat, discount applied, I rescinded their permission to send me text messages and marketing emails. (They had already texted me like two marketing messages, in addition to the ones confirming my phone number.) Overall, I’d rather have paid a few more dollars than gone through the hassle, which is why my standard operating procedure is to decline all such entreaties. A real discount is just offering a lower price. Anything else is a scam of some sort.

But the real problem is that it completely soured my impression of New Era. I am far less likely to purchase from them directly again. I will eventually buy a New Era cap again — their actual products are excellent, and they are the exclusive maker of official MLB on-field caps — but if I can buy it elsewhere, I will. I’ll go out of my way to avoid buying direct from New Era for the rest of my life.

The marketing shitbirds who press for these schemes — and insist on adding dickovers and dickbars to websites — do so by pointing to data that shows that they do convert some number of users. “It works,” they claim. What doesn’t show up in their data are interactions like mine. They don’t have analytics that measure that I now consider their website an antagonist to avoid at all costs.

Monday, 1 June 2026

- ‘The Metaverse Fever Dream’ ★

Nick Heer, at Pixel Envy, last week published a remarkable essay surveying — with copious receipts — the rise and fall of “metaverse” hype:

The obsession with the metaverse seems to have solidified in Silicon Valley after Matthew Ball published an essay in January 2020 in which he forecasted that, at the very least…

…it is likely to produce trillions in value as a new computing platform or content medium. But in its full vision, the Metaverse becomes the gateway to most digital experiences, a key component of all physical ones, and the next great labor platform. [...]

Ball published this essay with darkly fortuitous timing. A week earlier, Chinese health authorities had isolated a new strain of coronavirus aggressively spreading in Wuhan; a day before, they published its genetic sequence. Within a couple of months, the world had turned upside down and many of us were suddenly spending our days in a space that felt more virtual than physical. We may have only been working from home — or, at least, those of us who had the option and were not laid off — and socializing over Zoom, all while remembering the last concert we went to or the last time we ate a meal in a restaurant.

Just a tremendous piece of writing and reporting from Heer. What a pile of horseshit “the metaverse” as promulgated by Zuckerberg was. To call what Heer has assembled here, in a compelling narrative to boot, “comprehensive” is a vast understatement. These hucksters were selling a bill of goods and now they’re trying to whistle past their own hype:

As for the futurists like Hackl, who confidently proclaimed the metaverse was “for certain”, they have found an out thanks to its flexible definition. Jeff Barrett, of the Shorty Awards’ “It’s No Fluke” podcast, published a glowing profile of “the Godmother of the Metaverse” earlier this year under the headline “Why Cathy Hackl Keeps Getting the Future Right”. “When enthusiasm cooled and narratives collapsed, many distanced themselves from the space”, writes Barrett, noting with seeming approval that “Hackl did the opposite. She reframed it”. Many people — perhaps everyone, come to think of it — could predict the future if they got to retcon their predictions to fit reality.

Bravo.

Follow-up: “The Metaverse Was Snake Oil for Isolation”.

- ‘If You Take the Weasel Job Then You Must Be the Weasel’ ★

Hamilton Nolan, writing at How Things Work:

There are only a few reasons why you might be hired for a prestigious job that you are obviously not qualified for. One is “they have recognized you for the genius that you are.” The urge to conclude that this is, in fact, the reason must be overwhelming, if you are the person in question. But this is rarely the explanation.

Another possibility is “the person who hired you is a fucking idiot.” This happens. A number of current United States cabinet secretaries got their jobs this way.

The most likely reason, though — one that often overshadows the other ones — is, “you are willing to carry out the dirty and distasteful things to come.” This is why weird hirings at the top always provoke dread among all the other employees. Maybe you are a hidden gem, sure, but Occam’s Razor says that you are probably just a hatchet man.

Nick Bilton, a former tech writer for the New York Times and Vanity Fair and maker of a few documentaries, was just hired as the new head of 60 Minutes.

Bilton tried to introduce himself to the (remaining) staff at 60 Minutes this morning and it did not go well.

- ‘We Are Living in Pinocchio’s World’ ★

Om Malik:

The Adventures of Pinocchio was published in serial form in 1881, aimed at Italian children in the way the 19th century aimed things at children, full of suffering, consequence, and moral instruction delivered through catastrophe. The puppet is hanged. He is swallowed by a giant fish. He watches companions degrade into beasts of burden. The world he moves through is predatory at every level, and the institutions that should protect him are either absent, corrupted, or actively hostile to his interests. [...]

Most people remember Pinocchio as a story about lying. The nose grows. You get caught. Lesson learned. But that reading misses almost everything Collodi was actually doing. The book is a close study of a society where deception has gone ambient, woven into every institution, every transaction. Courts punish victims. Authority figures perform competence without exercising it. Experts are decorative. Society holds together through spectacle and habit rather than accountability. Into this environment, a naive creature is released, constitutionally unable to resist a good story about easy reward.

The nose is the least interesting lie in the book. The interesting lies are the ones that work.

I’m not sure which sphere of interest this essay applies better to: post-AI tech, or post-Trump politics. I mean, goddamn, what a paragraph this one is:

The grifters and the hucksters and the influencers selling impossible things succeed because audiences reward certainty and punish doubt. They honor confidence and resist complication. A clean story about a genius who will fix everything travels faster than a difficult story about tradeoffs. The Field of Miracles stays open because people keep wanting to bury their coins there.

- Amazon Made AI Podcasts for Products ★

Katie Notopoulos, a month ago at Business Insider:

Amazon has launched a new feature that uses AI to generate a short, podcast-like audio segment where two “hosts” discuss the merits and reviews of a specific product.

I think it could be one of the funniest, closest endpoints to human civilization we’ve seen yet in our new AI-enabled world. If this sounds a little confusing, here’s an example. I tried it out for diaper rash cream, and, voila! A podcast! (Sound on.)

I don’t know what’s worse: that anyone at Amazon thought actual people would really listen to these, or if actual people really are listening to them.

Sunday, 31 May 2026

- The Talk Show Live From WWDC 2026: Tuesday June 9 ★

Location: The California Theatre, San Jose

Showtime: Tuesday, 9 June 2026, 7pm PT (Doors open 6pm)

Special Guest(s): For sure

Price: $45

The annual live audience episode of The Talk Show during the week of WWDC. If you can make it, you should come. You’ll even enjoy the prelude, mingling with fellow DF readers and listeners.

Also: at least one sponsorship slot is still available. If you’ve got a product or service you’d like to see me promote at the start of the show, shoot me an email.

The Fonts of the U.S. Federal Courts

Friday, 22 May 2026

The 13 circuits of the U.S. federal courts of appeals operate with a fair amount of independence, including their typographic choices. I was reminded of this today while reading the aforelinked decision from the Ninth Circuit in Epic v. Apple, because the Ninth Circuit sets their decisions in Times New Roman — a font that came up back in December in the context of the Trump State Department.

Long argument short, Times New Roman isn’t bad, but it isn’t good. It is the median choice. But most of the circuit courts use it: the Third, Fourth, Sixth, Eighth, Ninth, Tenth, and Eleventh. It could be worse: the First Circuit not only uses Courier New (the worst version of Courier, so of course it’s the one Microsoft shipped with Windows), but fully justifies their text — contrary to the nature of a monospaced font. (The Fourth Circuit only recently switched from Courier New to Times New Roman — an upgrade, to be sure, but a disappointingly mediocre one.) It could be better: the Second and Seventh use Palatino. (Note how much better that Seventh Circuit decision looks than the Second’s, with its wider margins creating a narrower column of text.)

But it can be much better. The Fifth Circuit was long typographically superior to its peers, using Century Schoolbook — a highly legible font with great tradition and the right vibe. But in 2020, the Fifth Circuit upgraded, switching to Equity, Matthew Butterick’s excellent type family (which, of course, is used throughout Butterick’s own web book, Typography for Lawyers). Here’s a before-and-after tweet noting the change. The results are typographically sublime (including improved margins).

The gold standard is the U.S. Supreme Court, which uses Century Schoolbook. Yes, I just praised the Fifth Circuit’s change from Century Schoolbook to Equity as an upgrade, but tradition and consistency have their place. The Supreme Court’s typographic style has been stunningly consistent for — no pun intended — well over a century. (If only that were true of their recent decisions. Rimshot.) Here is last month’s Louisiana v. Callais decision — the gerrymandering / redistricting case. Here is 1954’s Brown v. Board of Education. I’d give the nod to the older one, which made better use of proper small caps, but the overall consistency is obvious.

Here is the 2026 edition of the Rules of the Supreme Court. Not only does the Court use Century Schoolbook for its own decisions, it requires submissions to the Court to use the same (p. 44):

The text of every booklet-format document, including any appendix thereto, shall be typeset in a Century family (e. g., Century Expanded, New Century Schoolbook, or Century Schoolbook) 12-point type with 2-point or more leading between lines. Quotations in excess of 50 words shall be indented. The typeface of footnotes shall be 10-point type with 2-point or more leading between lines. The text of the document must appear on both sides of the page.

Every booklet-format document shall be produced on paper that is opaque, unglazed, and not less than 60 pounds in weight, and shall have margins of at least three-fourths of an inch on all sides. The text field, including footnotes, may not exceed 4⅛ by 7⅛ inches.

Why the extra one-eighth of an inch instead of just 4 × 7? I don’t know. But 4⅛ × 7⅛ is exactly the size of the text field in the Court’s own decisions.

Now compare the current 2026 rulebook to this edition printed in 1910 (with rules adopted in 1884). The consistency is striking — but, once again, the older version makes better use of small caps and just has a bit more vim and vigor to it. Just look at page 44, for example. It’s perfect. The current Court’s document formatters should aspire only to more closely ape the confidence and sturdiness of this older one. A century from now, U.S. Supreme Court decisions should look as similar to today’s as today’s do to those from a century ago.

The various circuit courts using lesser typefaces, looser margins, and lazier formatting should follow the Fifth’s lead and get their shit together. Tuck your shirt in, comb your hair, straighten your tie, and pop a mint in your mouth. If you’re a United States federal court, your typographic style should reflect that.

Back in 2020, Butterick took a well-deserved victory lap when the Fifth Circuit adopted Equity.1 He quoted Fifth Circuit Judge Don Willett, a typography fan who spearheaded the restyling project, on its rationale. Willett wrote:

[Why] did the circuit devote finite judicial energy to swapping typefaces and widening margins? Simple answer: Our job is not just to present clear opinions, but to present our opinions clearly. Getting the law right is, of course, our tip-top priority. Nothing matters more. ... But good enough is never good enough. Our work is consequential, impacting the lives and livelihoods of real people walloped by real problems in the real world. The stakes are high, and we must present our best opinion, not merely a passable one. And that presentation begins before the first word is ever read. ★

-

In the very same post, Butterick sings the praises of the Apple Extended Keyboard II, and notes that he has several spares in reserve. I do keenly intend to take Butterick up on his standing offer to dine when next I’m in Los Angeles, but I worry that if we meet, we’ll trigger some sort of calamitous singularity of aligned taste.